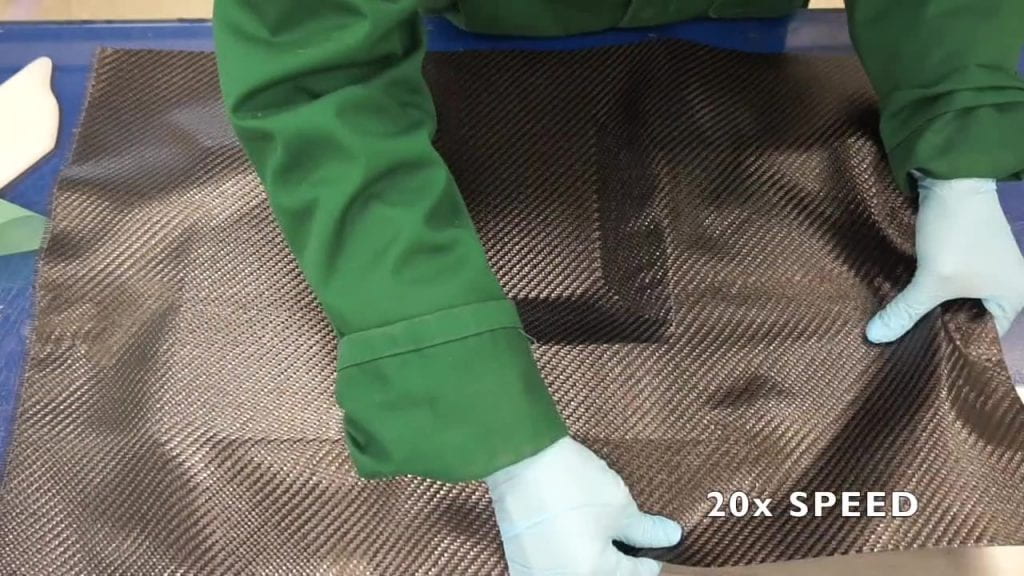

Towards the end of 2019, LayupRITE started its follow-on Ufi-funded project. The main aim of this project, titled “LayupRITE101: Augmented Training for Composites”, was to integrate the LayupRITE system into a more complete training course. LayupRITE would be used to augment the teaching of the NCC’s “Introduction to Manual Prepreg Layup” course. This 2-day course gives both theoretical and hands-on instruction in the manufacture of prepreg components by manual layup, covering safety, storage, materials, tooling, layup, consolidation and finishing operations. Alongside an e-learning component designed to enhance the existing classroom-based course notes, LayupRITE simulations would be used to demonstrate theory points and guide the workshop tasks.

Feedback from Ufi Project 1

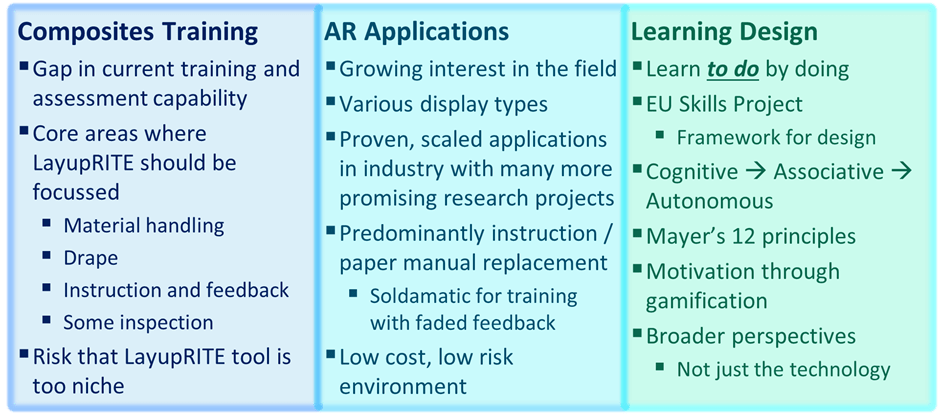

The first Ufi-funded project gave the LayupRITE developers a lot of useful feedback and learning points. Some points related to “what good looks like” for augmented-reality-backed learning; others related to cost and form-factor concerns.

In terms of “what good looks like”, the key element missing from the first project was the ability to practise by making mistakes. The drape algorithm solved the drape for the entire net, meaning that the user could only really change the start point. This was still useful, as the start point of a layup generally determines the final ply outline, and start point was taken as a learning accelerator. Project 1 also demonstrated that, without guidance, people will use a variety of starting locations.

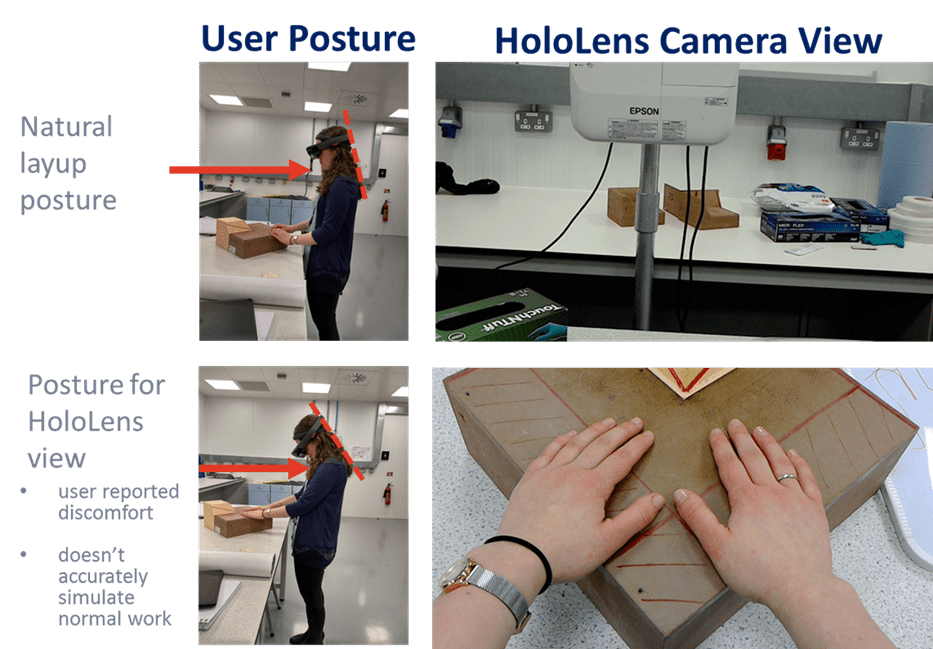

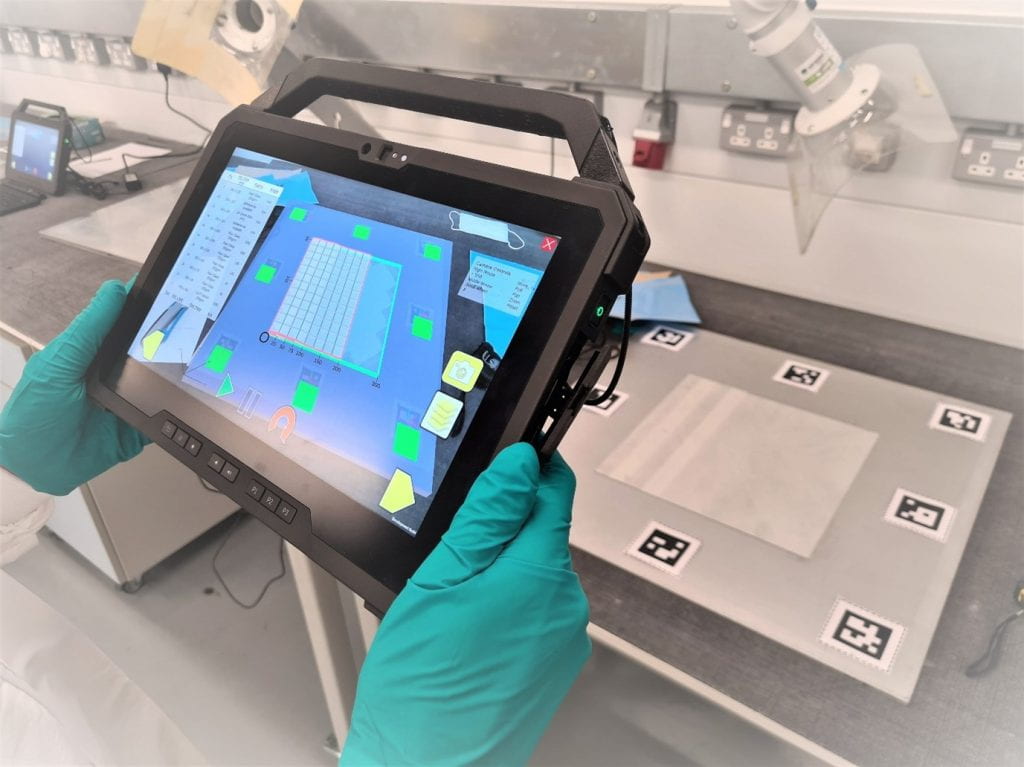

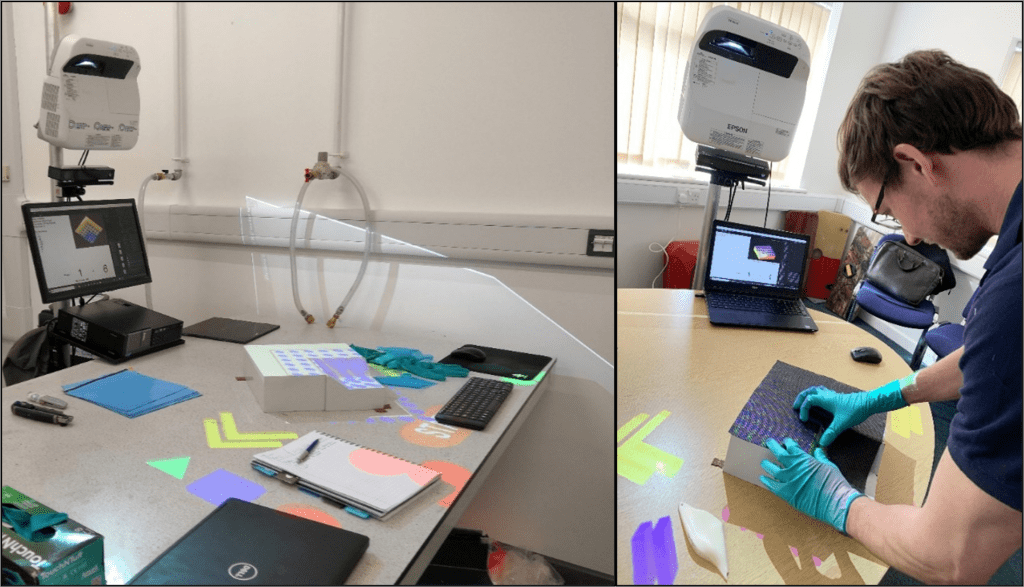

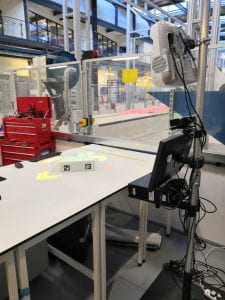

Feedback from our target customers, further education institutes, was that the projector-based system would be either too costly or too bulky for the environments in which they would use it. Additionally, during development there were issues with setup time and calibration, as well as with recalibration between setups. As a result of these concerns, tablet-based, pass-through AR was chosen as the form factor and AR delivery method for LayupRITE101. The developers’ intention was that the same device could be used in both the classroom and the workshop to guide the exercise.

LayupRITE101 Drape Simulation

“Facilitating deliberate practice”

The Pin-Jointed Net method for kinematic drape solves the drape for the entire net and reports the resulting net shape and shear angles. Previous work [1] has shown that drape simulation doesn’t necessarily match the feature-by-feature approach employed by laminators. Additionally, whilst it quickly solves the drape of a virtual net with next to no initial input (in our case, just a start point), it doesn’t allow the user to introduce defects, deliberately or otherwise. The method also lacks the interactivity that benefits learning.
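A draped pin-jointed net is usually summarised by the shear angle at each node: how far the angle between the warp and weft fibres has moved away from 90°. The sketch below shows that calculation for one node, assuming node positions are available as 3D points; the function and helper names are hypothetical and this is not LayupRITE’s own code.

```python
import math

Vec3 = tuple[float, float, float]

def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cell_shear_angle(corner: Vec3, warp_node: Vec3, weft_node: Vec3) -> float:
    """Shear angle in degrees at one node of a draped pin-jointed net.

    `warp_node` and `weft_node` are the corner's neighbours along the two
    fibre directions. Undeformed fibres cross at 90 degrees, so the shear
    angle is 90 minus the angle between the two edges.
    """
    warp = _sub(warp_node, corner)
    weft = _sub(weft_node, corner)
    cos_a = _dot(warp, weft) / math.sqrt(_dot(warp, warp) * _dot(weft, weft))
    cos_a = max(-1.0, min(1.0, cos_a))  # clamp rounding error before acos
    return 90.0 - math.degrees(math.acos(cos_a))
```

Because the calculation is done in 3D, it works the same for cells lying on a curved tool surface as for a flat net.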

The pin-jointed net assumption does lend itself to quickly trialling start points, and start point is a key accelerator in learning how composite plies drape. As such, it was made into its own standalone lesson “module”. The four lesson modules developed for the course are discussed later.

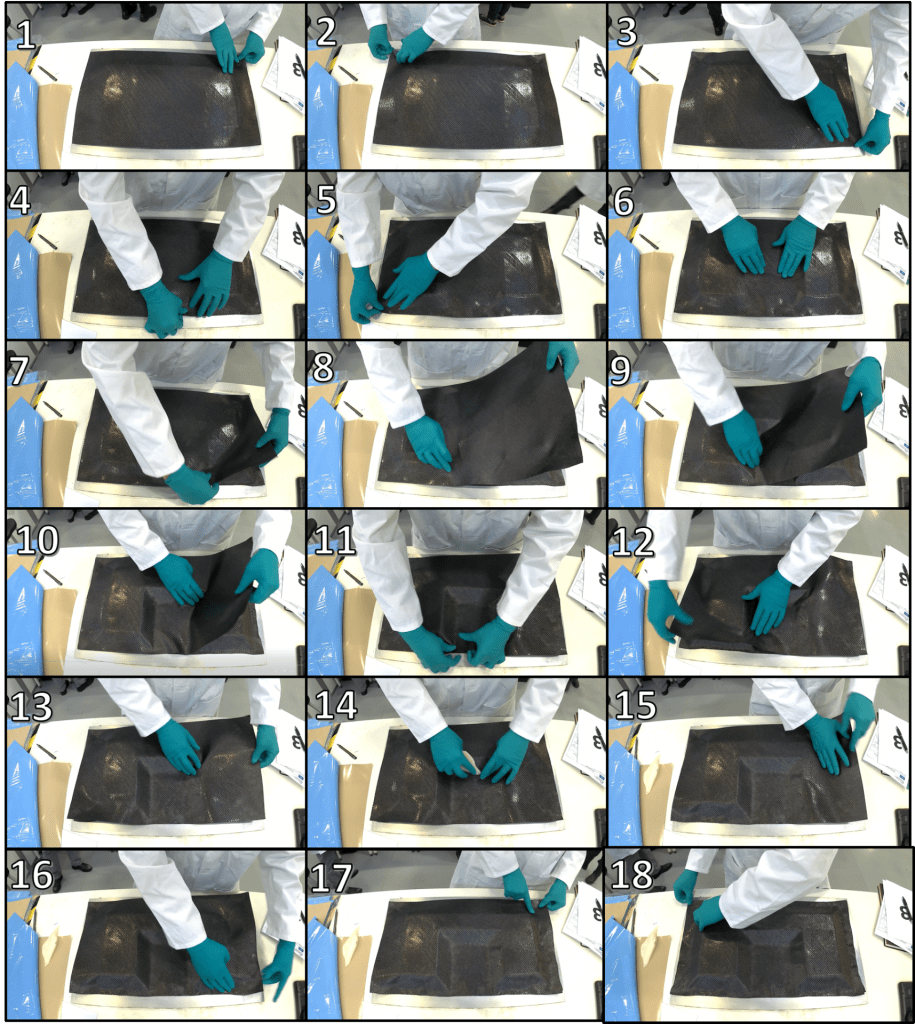

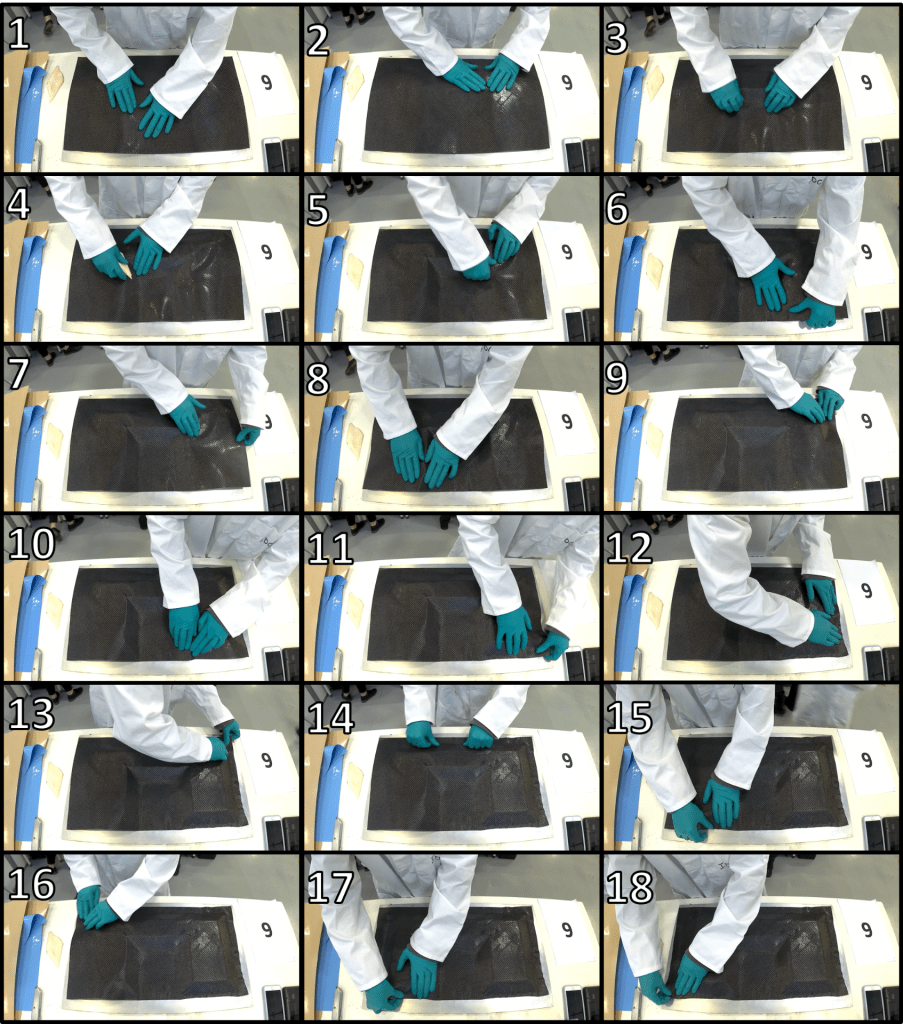

The real-time simulation of how the net should deform was useful for this project. Even more beneficial would be a net that users could interact with, so that they could practise draping a virtual net in the more natural feature-by-feature style rather than having it solved automatically. This required creating a new type of manually controlled virtual net in the Unity environment.

In the manual net, nodes could be fixed in space or moved. This enabled the virtual net to be pre-shaped or folded before it was placed on the tool.
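One way to build a net whose nodes can be fixed or dragged while the rest follow is to treat the warp and weft fibres as fixed-length links and repeatedly project node positions until each link returns to its rest length (position-based constraint projection). This is an assumed technique chosen for illustration, not necessarily how the LayupRITE101 Unity net works; all names below are hypothetical. With no diagonal links, cells can shear freely but fibres cannot stretch.

```python
import math

Vec = list[float]  # [x, y, z]

def make_net(n: int, spacing: float) -> list[Vec]:
    """Flat n x n grid of node positions, stored row by row."""
    return [[i * spacing, j * spacing, 0.0] for j in range(n) for i in range(n)]

def fibre_links(n: int) -> list[tuple[int, int]]:
    """Warp and weft links between neighbouring nodes (no diagonals)."""
    links = []
    for j in range(n):
        for i in range(n):
            k = j * n + i
            if i + 1 < n:
                links.append((k, k + 1))  # warp neighbour
            if j + 1 < n:
                links.append((k, k + n))  # weft neighbour
    return links

def relax(pos: list[Vec], links: list[tuple[int, int]], rest: float,
          fixed: set[int], iterations: int = 100) -> list[Vec]:
    """Move free nodes so every link is pulled back towards `rest` length.

    Nodes in `fixed` (pinned, or currently dragged by the user) are never
    moved: the whole correction goes to the free end of the link, or is
    split evenly when both ends are free.
    """
    for _ in range(iterations):
        for a, b in links:
            d = [pos[b][k] - pos[a][k] for k in range(3)]
            length = math.sqrt(sum(c * c for c in d))
            if length == 0.0:
                continue
            err = (length - rest) / length  # fraction of d to remove
            wa = 0.0 if a in fixed else 1.0
            wb = 0.0 if b in fixed else 1.0
            if wa + wb == 0.0:
                continue  # both ends held, nothing can move
            for k in range(3):
                pos[a][k] += wa / (wa + wb) * err * d[k]
                pos[b][k] -= wb / (wa + wb) * err * d[k]
    return pos
```

For example, pinning one corner of a single cell and dragging the opposite corner outwards along the diagonal (`pos[3] = [1.2, 1.2, 0.0]` with `fixed={0, 3}`) leaves the two free nodes on new positions where every fibre keeps its length and the cell has sheared. No amount of relaxation can lengthen a fibre link, so dragging two nodes apart along a single fibre line has no consistent solution.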

By fixing some nodes and moving others, the net could also demonstrate another key learning point for composite drape: bias-extension shear. Fibres are strong (that’s the point), so pulling in the fibre direction won’t deform, shear or shape the net. Pulling off the fibre axis, in the “bias” direction, is how a laminator shears the net to make it conform to the mould tool. This was also a key learning accelerator and was made into its own module.
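For a single idealised pin-jointed cell, bias extension follows directly from geometry. If the sides have fixed length `side` and the fibres cross at angle α, the bias diagonal is `2 * side * cos(α / 2)`, so pulling that diagonal longer closes α and raises the shear angle 90° − α. The sketch below assumes perfectly inextensible fibres with no locking (a simplification of real fabric behaviour); the function name is hypothetical. Pulling along a fibre would need a side to lengthen, which this model forbids, so only a bias pull produces shear.

```python
import math

def shear_from_bias_pull(diagonal: float, side: float = 1.0) -> float:
    """Shear angle (degrees) of a pin-jointed cell after its bias
    diagonal has been pulled (or pushed) to length `diagonal`.

    An unsheared square cell has a diagonal of side * sqrt(2). The
    diagonal can never reach 2 * side, where the fibres would lie on top
    of each other.
    """
    if not 0.0 < diagonal < 2.0 * side:
        raise ValueError("diagonal must lie strictly between 0 and 2 * side")
    alpha = 2.0 * math.degrees(math.acos(diagonal / (2.0 * side)))
    return 90.0 - alpha  # positive when stretched, negative when compressed
```

Stretching the diagonal by 20% beyond its unsheared length gives roughly 26° of shear, while the same 20% stretch along a fibre is simply impossible for this cell.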

Drape Simulation Modules

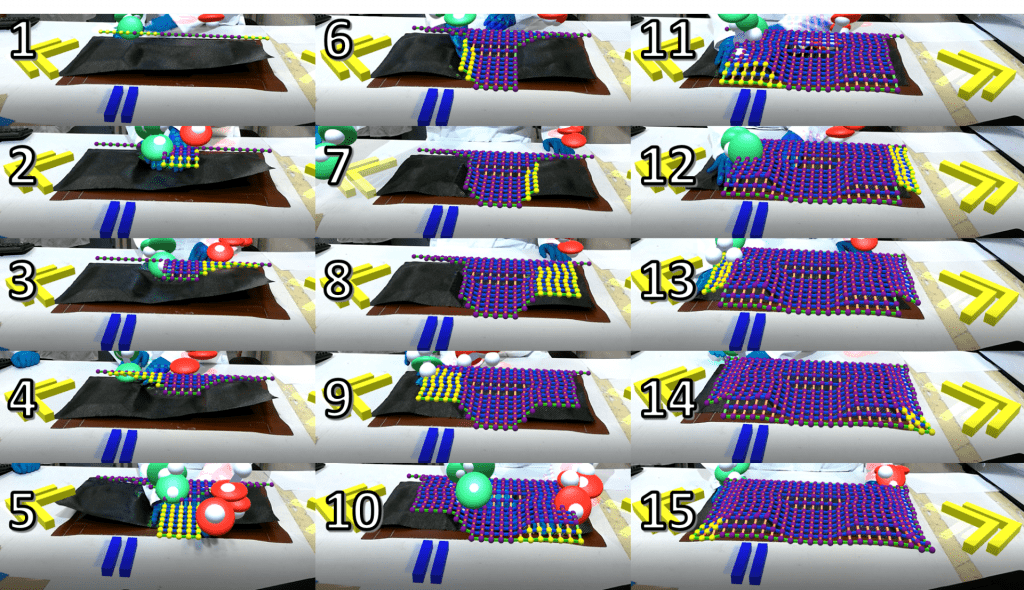

In total, four modules were developed and trialled within LayupRITE101. In addition to the two accelerators mentioned earlier, start point and bias extension, there was a “free practice” module and the workshop exercise.

Bias-Extension and Shear

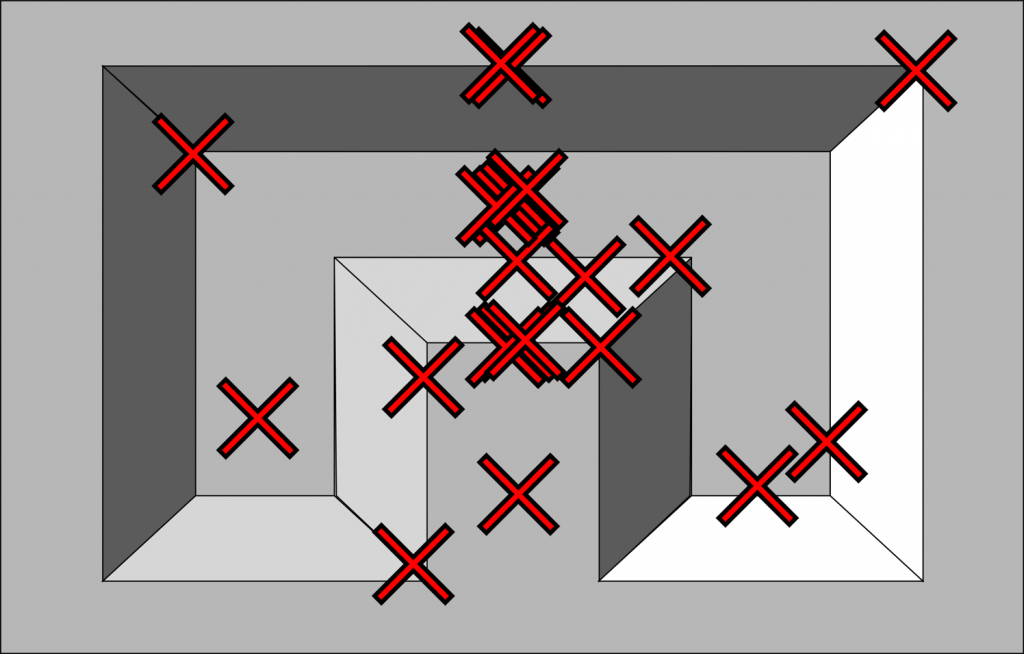

The bias-extension lesson is a quick (under 5 minute) exercise demonstrating the learning accelerator of the same name. The final version supports both mouse and touch controls and shows increasing shear by changing the colour of the net, eventually displaying an “X”-shaped symbol when a cell is over-sheared. The exercise demonstrates pulling the edges and corners of both 0°/90° and ±45° nets.
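The colour feedback can be sketched as a mapping from each cell’s shear angle to a colour ramp, plus a flag that switches on the “X” symbol once the cell passes a locking angle. The 45° default threshold, the green-to-red ramp and all names below are assumptions for illustration; the thresholds and colours LayupRITE101 actually uses are not stated here, and real locking angles depend on the fabric.

```python
from dataclasses import dataclass

@dataclass
class CellDisplay:
    rgb: tuple[int, int, int]  # colour used to draw the cell
    show_x: bool               # draw the over-shear "X" symbol

def shade_cell(shear_deg: float, locking_angle: float = 45.0) -> CellDisplay:
    """Map a cell's shear angle to display settings.

    Shear magnitude is used, so positive and negative shear shade the
    same way. The colour ramps linearly from green (no shear) to red (at
    the locking angle) and stays red beyond it, where the cell is also
    flagged as over-sheared. `locking_angle` is a hypothetical threshold.
    """
    t = min(abs(shear_deg) / locking_angle, 1.0)
    rgb = (round(255 * t), round(255 * (1.0 - t)), 0)
    return CellDisplay(rgb=rgb, show_x=abs(shear_deg) > locking_angle)
```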

Start Point

This exercise uses the automatically draping nets to compare starting points. The user selects the starting node by mouse or touch, then taps or clicks to begin the drape. A text readout compares net statistics (net fibre angle, max shear, average shear, % over-shear) for the last two nets positioned. The graphic also shows the outlines of both nets and the full shear mesh of the topmost net.
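The statistics in the readout can be sketched from a list of per-cell shear angles. This version assumes that “average shear” means the mean shear magnitude and that over-shear is judged against a single hypothetical locking angle; the class and function names are illustrative only.

```python
from dataclasses import dataclass
from statistics import fmean

@dataclass
class NetStats:
    fibre_angle: float     # ply orientation in degrees, e.g. 0 or 45
    max_shear: float       # largest |shear| of any cell, degrees
    mean_shear: float      # average |shear| over all cells, degrees
    pct_over_shear: float  # share of cells beyond the locking angle, %

def net_stats(fibre_angle: float, cell_shears: list[float],
              locking_angle: float = 45.0) -> NetStats:
    """Summarise one draped net (assumes at least one cell)."""
    mags = [abs(s) for s in cell_shears]
    over = sum(1 for m in mags if m > locking_angle)
    return NetStats(
        fibre_angle=fibre_angle,
        max_shear=max(mags),
        mean_shear=fmean(mags),
        pct_over_shear=100.0 * over / len(mags),
    )

def compare(previous: NetStats, latest: NetStats) -> str:
    """Side-by-side text readout for the last two nets positioned."""
    rows = [
        ("Fibre angle", previous.fibre_angle, latest.fibre_angle),
        ("Max shear", previous.max_shear, latest.max_shear),
        ("Average shear", previous.mean_shear, latest.mean_shear),
        ("% over-shear", previous.pct_over_shear, latest.pct_over_shear),
    ]
    return "\n".join(f"{name:<14}{a:>8.1f}{b:>8.1f}" for name, a, b in rows)
```

Keeping only the last two `NetStats` objects is enough to drive a two-column comparison like the one described above.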

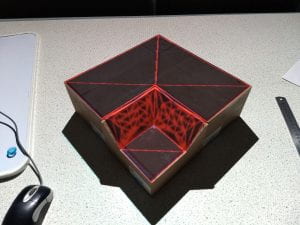

Workshop Exercise

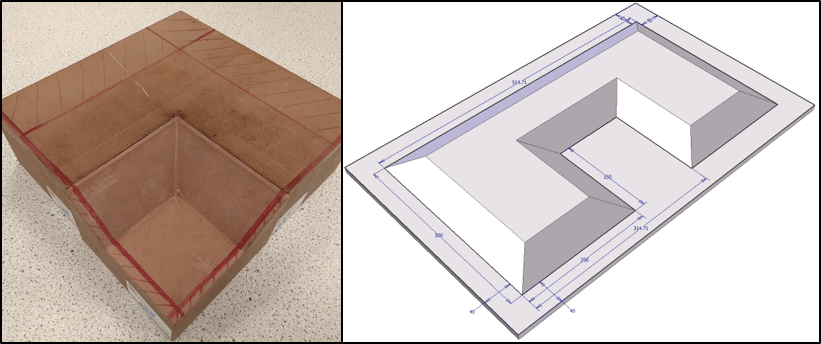

This module uses script-controlled manual nets to give step-by-step instructions for the workshop exercise. These cover ply order, orientation, location, the location of any joints or overlaps, material type, and any cores or adhesives. The module also has an exploded view for easy visualisation and is intended to effectively replace the paper ply book and work instruction. It also included an AR function in which the virtual plies lined up relative to printed AR markers positioned on the tool. Feedback from users was very positive about the “virtual ply book” aspect of the module and less positive about the AR implementation. Overall, this module gave confidence in the whole system and suggested possible expansion routes for LayupRITE.
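A virtual ply book needs, at minimum, one record per layup step holding the items listed above, plus a way to spread the plies apart for an exploded view. The sketch below assumes a simple record type and a constant display gap along the tool normal; every field and function name is hypothetical and not taken from LayupRITE101.

```python
from dataclasses import dataclass, field

@dataclass
class PlyStep:
    """One step of a hypothetical virtual ply book."""
    order: int              # position in the layup sequence
    material: str           # prepreg, core or adhesive name
    orientation_deg: float  # fibre orientation relative to the tool datum
    location: str           # where on the tool the ply goes
    joints: list[str] = field(default_factory=list)  # joint/overlap notes

def instruction(step: PlyStep) -> str:
    """Plain-text work instruction for one step."""
    text = (f"Step {step.order}: place {step.material} at "
            f"{step.orientation_deg:g}° on {step.location}")
    if step.joints:
        text += " (" + "; ".join(step.joints) + ")"
    return text

def exploded_offsets(steps: list[PlyStep], gap: float) -> dict[int, float]:
    """Offset each ply along the tool normal by its place in the sequence.

    Separating plies by a fixed display gap lets each one be seen on its
    own, like an exploded assembly drawing.
    """
    ordered = sorted(steps, key=lambda s: s.order)
    return {s.order: i * gap for i, s in enumerate(ordered)}
```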

VFP Basic – Free Practice

Unlike the other modules, VFP Basic has no specific guidance and is intended as a practice area for users. Tools (in *.stl format) can be loaded, and automatically sized nets can be created in any fibre orientation. Both automatically and manually draped nets are supported, and their shear meshes are displayed in the program. Nodes can be fixed in space, selected and moved, or left as “normal”, with their position solved by the simulation. When a node contacts the tool surface, it sticks and becomes “draped”. An “undo” function lifts a stuck node back off the tool. Lifting a ply is typically more challenging in the real world, but undo lets users make and fix mistakes, which is important for learning.
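The node behaviour described above can be sketched as a small state machine with an undo stack. This is an assumed design for illustration: only node states are tracked, the drape solver and tool collision test are out of scope (they are assumed to call `on_contact`), and undo here lifts the most recently draped node. All names are hypothetical.

```python
from enum import Enum, auto
from typing import Optional

class NodeState(Enum):
    NORMAL = auto()  # position solved by the simulation
    FIXED = auto()   # pinned in space by the user
    DRAPED = auto()  # stuck to the tool surface after contact

class VirtualNet:
    """Hypothetical node bookkeeping for a free-practice net."""

    def __init__(self, node_count: int) -> None:
        self.state = [NodeState.NORMAL] * node_count
        self._draped_history: list[int] = []  # order in which nodes stuck

    def fix(self, node: int) -> None:
        """Pin a free node in space."""
        if self.state[node] is NodeState.NORMAL:
            self.state[node] = NodeState.FIXED

    def release(self, node: int) -> None:
        """Hand a pinned node back to the simulation."""
        if self.state[node] is NodeState.FIXED:
            self.state[node] = NodeState.NORMAL

    def on_contact(self, node: int) -> None:
        """Any node touching the tool sticks, as tacky prepreg would."""
        if self.state[node] is not NodeState.DRAPED:
            self.state[node] = NodeState.DRAPED
            self._draped_history.append(node)

    def undo(self) -> Optional[int]:
        """Lift the most recently draped node; return it, or None."""
        if not self._draped_history:
            return None
        node = self._draped_history.pop()
        self.state[node] = NodeState.NORMAL
        return node
```

A stack suits this because mistakes are usually noticed on the last few nodes pressed down, so they can be peeled back in reverse order.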

Trials

Two sets of in-person trials were planned: at the National Composites Centre and with partner further education institutions. Unfortunately, due to the global Coronavirus pandemic, these plans were severely curtailed. The NCC trials were still able to go ahead with masks and physical distancing, but the offsite trials had to be made virtual. This necessitated some additional software management, as the original plan had been to have the programs on NCC hardware, which the trial team would take away at the end of the trial.

Respondents were asked to complete a survey, and the LayupRITE system was generally well received. The top-level scores out of 5 are summarised here:

| Module | Rating (out of 5) | Responses |
|---|---|---|
| Bias-Extension Lesson | 3.67 | 12 |
| Start Point Lesson | 4.17 | 13 |
| Workshop Session | 4.43 | 7 |
| VFP Exercise | 3.80 | 10 |

The workshop exercise received the most favourable feedback, while the bias-extension lesson (3.67) and the VFP practice session (3.80) received the lowest ratings. The most common criticism of the VFP exercise was that it lacked proper context within the trial session. Most respondents also gave negative feedback on the sound design, for which the quick fix is to include a mute button.

The AR implementation was also reviewed negatively and needs further development to improve its accuracy and ease of use. The team expected this, given some hardware and development issues, and sees it as fixable given the proliferation of similar AR applications.

LayupRITE101 Conclusions

This project has allowed substantial further development of the LayupRITE simulation system and taught the team a great deal about the requirements for integrating it into an existing course. The flexible software design and e-learning content mean that information can be easily extracted and repackaged into other courses where relevant. Using a digital ply book with an exploded view allowed the instructors to spend less time demonstrating and more time interacting with learners, a great outcome for trials that were fairly limited by the pandemic.

There is great potential to develop the exercises and accelerators further as standalone learning elements, as well as to improve the software. The workshop exercise/virtual ply book has the potential to be developed into its own work instruction generation methodology and is a subject of further work and development.

At this point we would like to thank the Ufi Charitable Trust for funding and supporting this project as well as the National Composites Centre for facilities and trials help.